
Innovation is like a playground with endless possibilities, and Broadcom and VMware are teaming up to create the ultimate tech amusement park! Think of Broadcom as the roller coaster, with its organic growth and acquisition adventures, built upon the legacy of tech pioneers like AT&T Bell Labs and Hewlett Packard. Together with VMware, they're on a mission to take virtualization technology to new heights!

Broadcom recently stated that they are so committed to innovation that they're splurging an extra $2 billion annually to unlock customer value. Half of that goes into mind-blowing research and development (R&D), while the other half goes toward turbocharging VMware solution deployments through professional partner services from firms like CDW. It's like giving virtualization a jetpack!

Picture this: enterprises building their own next-generation software-defined data centers, either on-premises or in private clouds. No more being held hostage by mixed cloud environments! With Broadcom's help, VMware's technology will become even easier to use, combining the productivity, efficiency, and resiliency of public clouds with the convenience of on-premises and private cloud environments. It's a virtualization extravaganza!

But wait, there's more! Broadcom wants everyone to enjoy the multi-cloud party. They're extending VMware's software stack to run and manage workloads across private and public clouds. Imagine effortlessly running your applications anywhere you please, with a smooth transition from on-premises to any cloud platform you fancy. It's like having a personal cloud party planner!

Broadcom is also bringing the fun to VMware's professional services capabilities. They're investing in external partners and professional services support, with the likes of CDW and others, to make sure enterprises can deploy private clouds like pros. With Broadcom's backing, VMware can support more customers, team up with global system integrators, and double down on professional services. It's like the tech equivalent of a grand expansion pack!

Broadcom's business model is all about the evolution of technology. They believe that by constantly improving what they do best, they can create a roadmap to greatness. And when Broadcom unlocks VMware's potential, the tech industry and customers everywhere will reap the rewards. It's like opening a treasure trove of innovation! So buckle up, tech enthusiasts, because Broadcom and VMware are joining forces to create an incredible tech adventure where the possibilities are endless, the innovation is thrilling, and the fun never stops! Let's ride the wave of innovation together!


vSphere 8.0 Update 1 is the latest release of VMware's vSphere platform, and it comes with a host of new features and capabilities that enhance the efficiency and reliability of IT operations.

One of the key new features is vSphere Configuration Profiles, which lets you manage ESXi cluster configurations by specifying a desired host configuration at the cluster level. You define a set of configuration settings, such as firewall rules, user accounts, and network settings, that all hosts in the cluster should conform to. You can then automate scanning the ESXi hosts for compliance with that desired configuration and remediate any host that is not compliant, which helps ensure consistent and secure configurations across your infrastructure. To use vSphere Configuration Profiles, you need a vSphere 8.0 Update 1 environment, a cluster whose lifecycle is managed with vSphere Lifecycle Manager images, and either an Enterprise Plus or a vSphere+ license.

vSphere 8.0 Update 1 also extends support for NVIDIA BlueField-2 DPUs to server designs from Lenovo and Dell, adds support for AMD Genoa CPU-based server designs from Dell, and adds UPTv2 for NVIDIA BlueField-2 DPUs. It also removes the requirement that all vGPUs on a physical GPU be of the same type, so you can mix vGPU profiles for compute, graphics, or Virtual Desktop Infrastructure (VDI) workloads on one GPU. This can save cost through higher GPU utilization and reduced workload fragmentation.

Another major enhancement in vSphere 8.0 Update 1 is the integration of VMware Skyline Health Diagnostics with vCenter. This self-service diagnostics platform is integrated with the vSphere Client and lets you detect and remediate issues in your vSphere environment. Additionally, vSphere 8.0 Update 1 introduces VM-level power consumption metrics, which let vSphere admins track power consumption per VM to support the environmental, social, and governance (ESG) goals of your organization.
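The desired-state idea behind Configuration Profiles (define once at the cluster level, scan each host, remediate the drifted ones) can be illustrated with a minimal Python sketch. This is an assumption-laden model, not the vSphere API: the setting names (`ssh_enabled`, `ntp_server`), the host names, and the helpers `find_drift`, `scan_cluster`, and `remediate` are all hypothetical.

```python
"""Hypothetical sketch of desired-state compliance scanning and remediation.

This is NOT the vSphere Configuration Profiles API. The setting names and
host data are invented purely to illustrate the scan/remediate cycle.
"""

from typing import Any, Dict, Tuple

Config = Dict[str, Any]
Drift = Dict[str, Tuple[Any, Any]]  # setting -> (desired value, actual value)


def find_drift(desired: Config, actual: Config) -> Drift:
    """Compare one host against the cluster-level desired configuration."""
    drift: Drift = {}
    for key, want in desired.items():
        have = actual.get(key)  # a missing setting is reported as None
        if have != want:
            drift[key] = (want, have)
    return drift


def scan_cluster(desired: Config, hosts: Dict[str, Config]) -> Dict[str, Drift]:
    """Return drift only for the hosts that are not compliant."""
    report: Dict[str, Drift] = {}
    for name, actual in hosts.items():
        drift = find_drift(desired, actual)
        if drift:
            report[name] = drift
    return report


def remediate(desired: Config, actual: Config) -> Config:
    """Return a new host config with every desired setting enforced."""
    fixed = dict(actual)   # leave settings outside the profile untouched
    fixed.update(desired)  # overwrite drifted or missing settings
    return fixed


if __name__ == "__main__":
    desired = {"ssh_enabled": False, "ntp_server": "ntp.example.com"}
    hosts = {
        "esx01": {"ssh_enabled": False, "ntp_server": "ntp.example.com"},
        "esx02": {"ssh_enabled": True},  # drifted: SSH on, NTP missing
    }
    report = scan_cluster(desired, hosts)
    print(report)  # only esx02 is reported
    for name in report:
        hosts[name] = remediate(desired, hosts[name])
    print(scan_cluster(desired, hosts))  # {} once every host is remediated
```

Keeping the scan read-only and the remediation a separate step mirrors the "check compliance first, then remediate" workflow described above, so drift can be reviewed before anything changes.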
vSphere 8.0 Update 1 also adds support for NVSwitch, which enables you to run high-performance computing (HPC) and AI applications, such as deep learning, scientific simulations, and big data analytics, that require multiple GPUs working together in parallel. Moreover, vSphere 8.0 Update 1 allows you to use the third-party identity provider Okta to log in to both vCenter and NSX Manager with the same token and password. Other enhancements in vSphere 8.0 Update 1 include Fault Tolerance support for virtual machines that use a virtual TPM (vTPM) module, Quick Boot support for servers with TPM 2.0 chips, vSphere API for Storage Awareness (VASA) version 5 for vSphere Virtual Volumes, sidecar files stored as regular files in the Config-vVol instead of as vSphere Virtual Volumes objects, and an increased default capacity for vSphere Virtual Volumes objects of type Config-vVol.

Finally, vSphere 8.0 Update 1 adds support for NVMe over TCP for vSphere Virtual Volumes. NVMe is a protocol for accessing non-volatile memory, such as solid-state drives (SSDs), over a high-speed interface. With NVMe over TCP, you can use this protocol to access storage over a standard TCP/IP network, which can deliver higher performance and lower latency than traditional storage protocols such as iSCSI or NFS. This can help improve the performance of storage-intensive workloads, such as databases or big data analytics.

VMware vSphere 8.0 Update 1 is a major upgrade that offers many new features and capabilities that improve the efficiency and reliability of IT operations.

As more organizations move their workloads to the public cloud, managing costs can become a significant challenge. I am seeing more and more customers move to hybrid-cloud or multi-cloud models. This brings its own challenges, and cost is a top concern.
I've been working with a customer to compare two popular cost optimization solutions, VMware Aria Cost and NetApp Spot, and thought I would share my thoughts and understanding of them. I will explore the benefits of each solution and help you determine which one might be better suited to your needs.

What is VMware Aria Cost (Powered by CloudHealth)?

VMware Aria Cost is a cloud cost management solution that helps organizations monitor and optimize their cloud spend. The solution provides a comprehensive view of cloud costs across multiple clouds and accounts, helping organizations identify and reduce unnecessary spending. This is very powerful because you can tie in one or more public clouds to gain visibility. VMware Aria Cost helps organizations to:

VMware Aria Key Capabilities
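To make the idea of a single cost view across clouds and accounts more concrete, here is a minimal Python sketch. It assumes invented billing records and a hypothetical 5% average-CPU threshold for flagging possibly idle resources; the `CostRecord` fields and both helper functions are illustrative only and do not reflect how VMware Aria Cost works internally or its API.

```python
"""Hypothetical sketch of a cross-cloud cost roll-up.

The record fields, sample data, and idle threshold are invented; this does
not reflect the VMware Aria Cost data model or API.
"""

from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List, Tuple


@dataclass(frozen=True)
class CostRecord:
    cloud: str          # e.g. "aws" or "azure"
    account: str        # billing account or subscription
    resource: str
    cost_usd: float     # spend for the billing period
    avg_cpu_pct: float  # average utilization over the same period


def spend_by_cloud_and_account(
    records: Iterable[CostRecord],
) -> Dict[Tuple[str, str], float]:
    """Single view of spend, keyed by (cloud, account)."""
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for r in records:
        totals[(r.cloud, r.account)] += r.cost_usd
    return dict(totals)


def idle_candidates(
    records: Iterable[CostRecord], cpu_threshold: float = 5.0
) -> List[CostRecord]:
    """Resources under the (assumed) utilization threshold, costliest first."""
    idle = [r for r in records if r.avg_cpu_pct < cpu_threshold]
    return sorted(idle, key=lambda r: r.cost_usd, reverse=True)


if __name__ == "__main__":
    records = [
        CostRecord("aws", "prod", "vm-web-1", 120.0, 45.0),
        CostRecord("aws", "dev", "vm-test-7", 80.0, 1.5),
        CostRecord("azure", "sub-analytics", "vm-etl-2", 200.0, 2.0),
    ]
    for key, total in spend_by_cloud_and_account(records).items():
        print(key, total)
    for r in idle_candidates(records):
        print("review:", r.cloud, r.account, r.resource, r.cost_usd)
```

Sorting the idle candidates by cost puts the biggest potential savings at the top of the review list; a real tool would normalize each provider's billing export into a common shape before any roll-up like this.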

I have heard about many different customer issues with Citrix as of late. Customers are wondering where Citrix is heading these days. Citrix has made some announcements and directional changes that are affecting its customers, and not always in a positive way. So, I thought I would give my two cents comparing the two platforms and, for those looking to make a move, discuss what a migration strategy should outline.

VMware Horizon and Citrix are two of the most popular virtualization solutions on the market today. While both platforms have similar functionality, some key differences make VMware Horizon a better choice for businesses looking to streamline their virtualization processes.

Superior Virtual Desktop Infrastructure (VDI) Technology

One of the most significant differences between VMware Horizon and Citrix is their approach to VDI technology. While Citrix offers a VDI solution, VMware Horizon has developed a more cutting-edge, integrated one. It provides a more flexible, scalable, and secure approach to desktop virtualization, making it a superior choice for businesses that manage many virtual desktops. With Horizon, users can access their virtual desktops from any device, anywhere, and anytime, without compromising security.

Greater Integration Capabilities

Another area where VMware Horizon outperforms Citrix is integration. VMware has developed a robust ecosystem of solutions that integrate seamlessly with Horizon, including cloud management platforms such as VMware Aria Suite and VMware Cloud Director, VMware Workspace ONE, and network virtualization solutions such as NSX. These integrations enable businesses to create a more comprehensive and cohesive virtualization strategy that meets their unique needs.

On the other hand, Citrix relies on a more fragmented approach to integration, often requiring businesses to use multiple solutions from different vendors to achieve the same functionality. This can lead to increased complexity, cost, and potential compatibility issues.

Having a clear understanding of an organization's technology landscape, and of how technology can help achieve business goals, is crucial in today's rapidly changing business environment. To ensure alignment between technology and business strategy, organizations need an Enterprise Architecture (EA) group. An EA group is responsible for defining and managing an organization's technology architecture and making sure it supports strategic goals. In this article, we'll explore the importance and benefits of building an EA group and provide guidance on how to establish one within an organization.

Where to Begin

When it comes to building an EA group, it's important to start with a clear understanding of your organization's business strategy and goals. This will help you identify the technology capabilities needed to support those goals and develop a roadmap for building out your EA capabilities.

Defining Strategic Goals

Defining strategic goals is a critical step for any organization in achieving long-term success. Here are some common steps that companies take to define their strategic goals:

Once you have a clear understanding of your organization's strategic goals, you can begin to identify the stakeholders who will be involved in the EA group and define their roles and responsibilities. This may include business leaders, IT leaders, architects, and other key stakeholders. Next, you'll want to develop an EA framework that outlines the principles, standards, and guidelines that will govern your organization's technology architecture. This framework should be aligned with your organization's strategic goals and should guide technology teams on how to develop and implement solutions that support those goals. Some examples of EA frameworks include the following:

The most popular Enterprise Architecture (EA) framework is The Open Group Architecture Framework (TOGAF). There are a few reasons why TOGAF is so widely used:

I am really not sure how to start this blog. This one is different from all my previous blogs about technology. To be honest, I’m feeling a little scared, but I feel like this will be cathartic for me and maybe, in some way, reach someone going through what I did. So, with a deep breath, here I go.

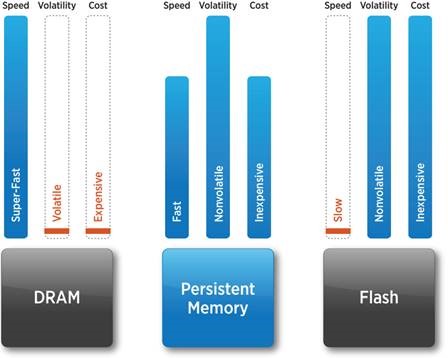

Let me start with a bit of background. Before COVID hit, I was very active in different tech communities. You see, I love what I do, and I love technology. I love to learn and share new ideas with others. I love to teach and mentor. I love seeing the impact on someone’s career that I may have played a small part in shaping. I love interacting with other peers within the industry and learning from them. I have made many friends through these communities. One in particular that I am passionate about is the VMware User Group (VMUG). I have had the honor of being a leader in this community for ten-plus years now. VMware reshaped my career path, and I’ll never forget the first time I was able to dive into their virtualization platform, back around version 3.x. Now, don’t get me wrong, this isn’t meant to be a VMware kiss-butt blog, but the impact they had on my career drove a deep excitement to share what I was learning with others in this community, and the same can be said today. This passion was felt within the community, and we grew. At one point, I led multiple VMUGs within Central New York and blogged at every chance I could.

Others noticed this passion, and I took on a new role as a pre-sales architect. I could now speak to customers about this passion and so many others. I even took on the roles of Citrix User Group Leader and Veeam User Group Leader. I was onsite with customers almost daily and planning upcoming community events with my peers. While at Sirius, now CDW, I took on the VMware Technology Brand Owner role. I traveled all over the country, teaching pre-sales engineers, post-sales delivery teams, and client executives about our strategy and how to grow their VMware business. I loved every minute of it. I felt alive and passionate, and I felt valued. My family at home supported me, and when I was home, I made the most of my time with them to ensure they felt valued. I have some of the most amazing children.
My oldest loves horses, and my middle son lives, eats, and breathes hockey. My youngest daughter lives on Jesus and sugar. She never walks but dances her way through life. My wife is a literal saint. I couldn’t do what I do without her tremendous support and encouragement. You must be wondering what in the world my point is. Brandon, it sounds like life is peachy, and everything sounds great. You have a successful career and a healthy family.

It's that time again: time to begin the process, which probably should have started a while ago, of upgrading your virtual infrastructure to vSphere 6.7. The end of general support for vSphere 6.0 is March 12, 2020. If you are on an earlier version of vSphere, you are already running an unsupported version and may also need to purchase new hardware to support the latest version. I would like to begin this blog with some of the stated benefits of upgrading your environment.

Benefits

The new vSphere 6.7 vCenter Server Appliance delivers major performance improvements over previous versions. First, vCenter Server delivers 2x faster performance in operations per second, which means better response times for the daily tasks you perform. There is also a 3x reduction in memory usage and 3x faster operations related to VMware vSphere Distributed Resource Scheduler (DRS). If you would like more detail on these improvements, you can find it in this blog by VMware.

New Features and Enhancements

There are a lot of great new features and enhancements in the latest version of vSphere, and if you are still on a version older than vSphere 6, there are even more to gain by moving to vSphere 6.7. Below are some of the new features in vSphere 6.7.

vSphere Quick Boot

vSphere Quick Boot restarts the ESXi hypervisor without rebooting the physical host, skipping time-consuming hardware initialization.
Trusted Platform Module (TPM) 2.0

vSphere 6.7 adds support for Trusted Platform Module (TPM) 2.0 hardware devices for ESXi hosts and also introduces virtual TPM (vTPM) 2.0 for VMs, significantly enhancing protection and helping ensure the integrity of both the hypervisor and the guest operating system (OS). This capability helps prevent VMs and hosts from being tampered with. For virtual machines, vTPM 2.0 enables the enhanced guest OS security features sought by security teams.

Encrypted vMotion

vSphere 6.7 also improves protection for data in motion by enabling Encrypted vMotion across different vCenter Server instances and versions. This makes it easy to securely conduct data center migrations, to move data across a hybrid cloud environment (that is, between on-premises and public cloud), or to move data across geographically distributed data centers.

Microsoft Virtualization-Based Security (VBS)

In 2015, Microsoft introduced virtualization-based security (VBS). vSphere 6.7 introduces support for the entire range of Microsoft virtualization-based security technologies introduced in Windows 10 and Windows Server 2016; VMware worked very closely with Microsoft to provide support for these features.

vSphere Persistent Memory

With vSphere Persistent Memory, administrators using supported hardware modules, such as those available from Dell EMC and Hewlett Packard Enterprise, can use them either as super-fast storage with high IOPS or expose them to the guest OS as non-volatile memory (NVM).

vCenter Server Hybrid Linked Mode

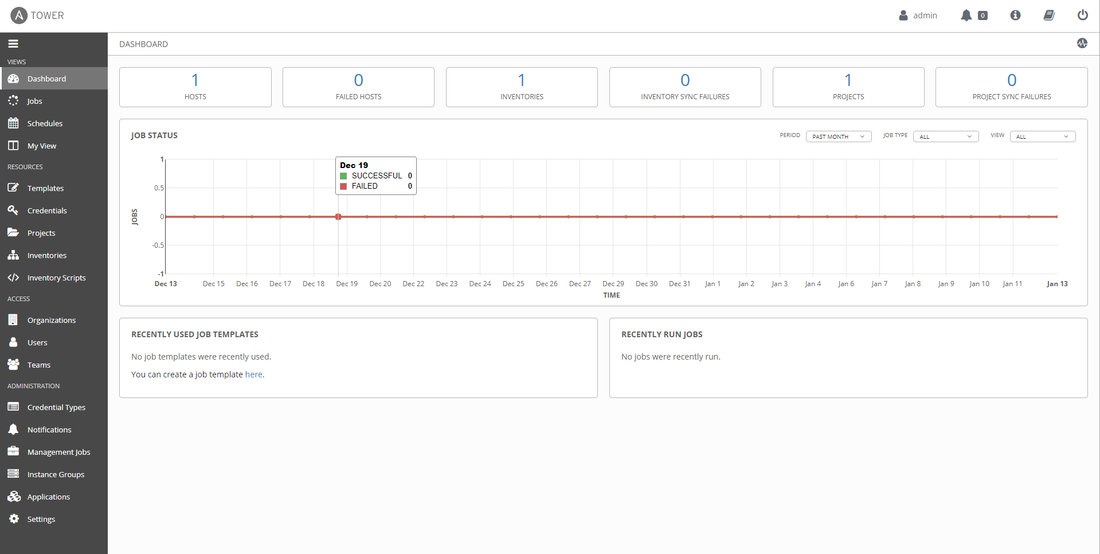

vSphere 6.7 introduces vCenter Server Hybrid Linked Mode, which gives users unified visibility and manageability across an on-premises vSphere environment running one version and a vSphere-based public cloud environment, such as VMware Cloud on AWS, running a different vSphere version.

Per-VM Enhanced vMotion Compatibility (EVC)

vSphere 6.7 introduces per-VM Enhanced vMotion Compatibility (EVC), a key capability for the hybrid cloud that makes the EVC mode an attribute of the VM rather than of the specific processor generation it is booted on in the cluster.

Simplification of the architecture

One significant change in vCenter Server Appliance 6.7 is a simplified architecture that reverts to running all vCenter Server services on a single instance. This is made possible by vCenter Server with an embedded Platform Services Controller, which now supports Enhanced Linked Mode.

This blog is an exploration of the Ansible Tower interface, but before I dive in, let's begin with an overview of what Ansible is. Ansible is an open-source software provisioning, configuration management, and application deployment tool from Red Hat. Ansible helps IT with the major challenge of enabling continuous integration and continuous deployment (CI/CD) with no downtime. With Ansible, IT organizations can automate the provisioning of applications, manage systems, and reduce the complexity that comes with trying to automate IT. With Ansible, we can break down silos and create a culture around automation. My thought has always been that if you need to perform a task more than once, it should be automated. Ansible integrates with the technologies your organization has already invested in, from infrastructure to networks, security, cloud, containers, and applications.
We all have infrastructure, whether it is physical bare-metal equipment, networking from Cisco, Juniper, and Arista, or storage from products like NetApp and Pure Storage. Virtual infrastructure with VMware is also supported, along with Red Hat Virtualization (RHV) and XenServer. Through Ansible, organizations can easily provision, destroy, inventory, and manage resources across all of these virtual environments. Regardless of platform, Ansible can help organizations manage software installation, system updates, configuration, and system features. Ansible Tower brings a web-based UI to Ansible, which makes it a little easier for IT to perform these tasks. Ansible Tower is the hub, of sorts, that gives IT role-based access control, including control over the use of securely stored credentials for SSH and other services. Let's take a few minutes to look at the Ansible Tower interface.

Ansible Tower Interface

On the left-hand side of the Dashboard, you can see the resources menu and the objects that you can create.

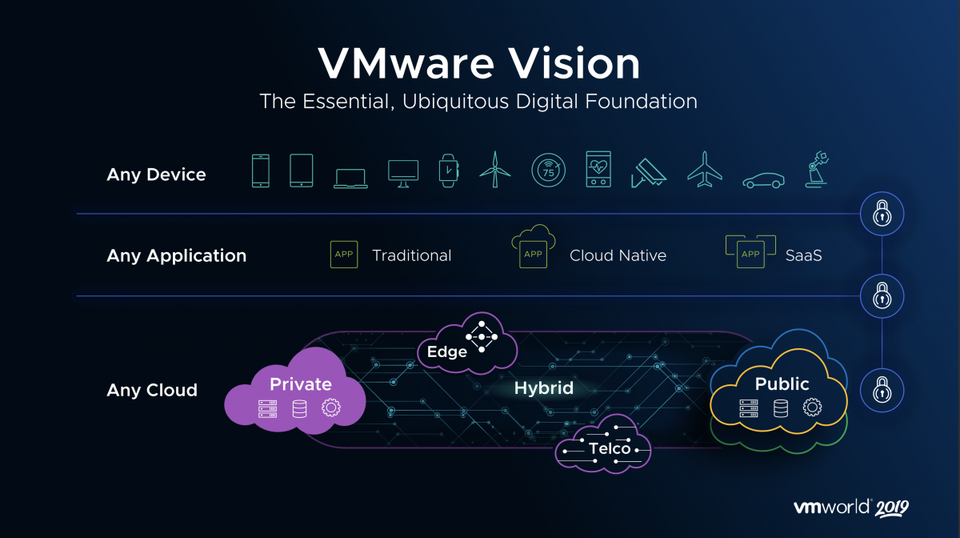

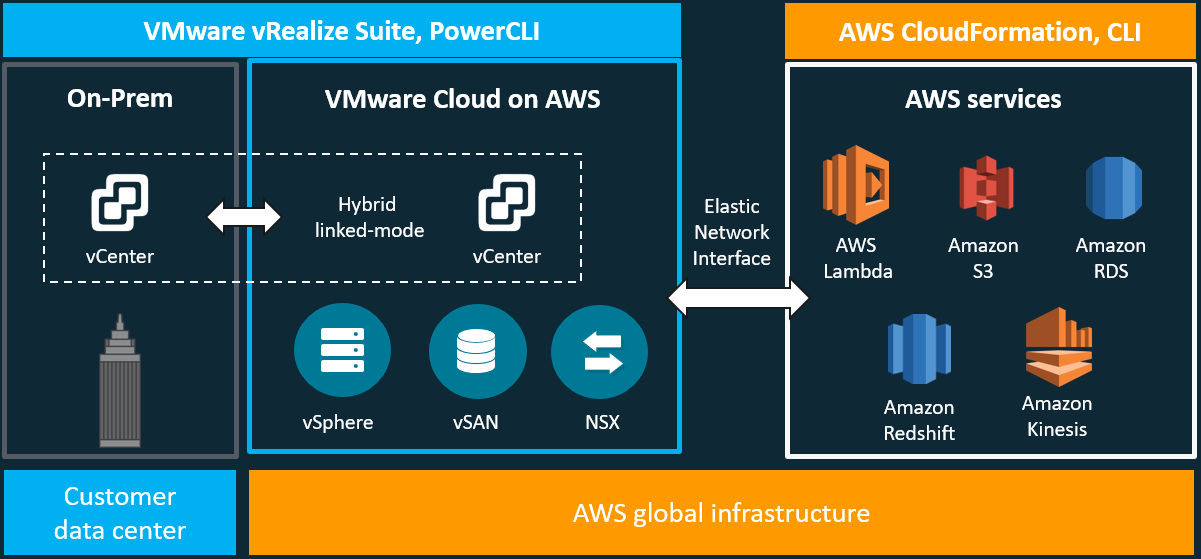

Let's dive a little deeper into each section, beginning with Credentials. In this section, you create a credential that Ansible can use to authenticate to the target hosts.

I wrote a blog about this subject before, which can be found here. The information in that blog is still relevant to this conversation; it walks you through the challenges of traditional three-tier architecture and how the industry, specifically VMware, has addressed those challenges. In this blog, I will update the vision that VMware has laid out for the hybrid cloud, which comprises VMware Cloud on AWS and VMware Cloud Foundation. To better understand this journey and how we arrived at the vision of Any Device, Any Application, and Any Cloud, take a look back at the previous blog. Let's begin with an overview of VMware Cloud on AWS.

Quick Overview of VMware Cloud on AWS

VMware Cloud on AWS is a jointly engineered and integrated cloud offering developed by VMware and AWS. Through this hybrid-cloud service, organizations can deliver a stable and secure solution to migrate and extend their on-premises VMware vSphere-based environments to the AWS cloud, running on bare-metal Amazon Elastic Compute Cloud (EC2) infrastructure.

VMware Cloud on AWS use cases fall into several buckets, and most customers find themselves in some overlap of them. The first is the data center extension use case, for organizations looking to migrate their on-premises vSphere-based workloads and extend their capacity to the cloud. The next is for organizations looking to modernize their recovery options, implement new disaster recovery, or replace existing DR infrastructure. The last one I will mention is for organizations looking to evacuate or consolidate their data centers through cloud migrations; this is a great fit for organizations facing data center refreshes. VMware Cloud on AWS is delivered, sold, and supported by VMware and its partners, such as Sirius Computer Solutions, a Managed Service Partner. It is available in a growing number of AWS Regions, which can be found here. Through this offering, organizations can build their hybrid solutions on the same underlying infrastructure that VMware Cloud on AWS runs on: VMware Cloud Foundation.

Day 1 began with the general session, which was a lot different from the previous year's, when the VMware executives laid out their vision for the partner community. This general session was more appropriately focused on the audience in attendance.
